Rustat Report on Artificial Intelligence, Big Data and Healthcare

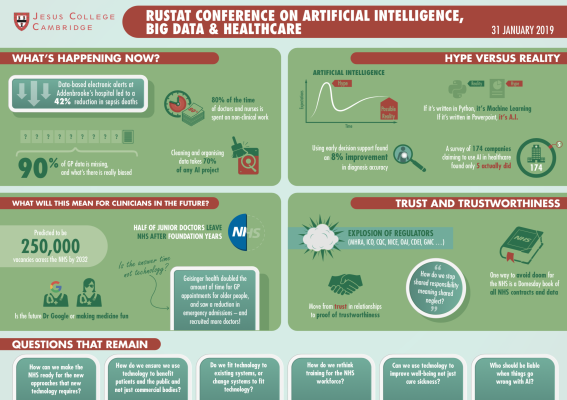

On 31 January 2019, the Rustat Conferences brought together experts from across academia, industry, the NHS, government, and enterprise, to discuss Artificial Intelligence (AI), Big Data (BD), and healthcare. The Conference infographic can be viewed here.

AI has been described by Dr Bertalan Meskó, The Medical Futurist, as “the stethoscope of the 21st Century”. However, despite this rhetoric and the increased discussion of these technologies in healthcare, development of the technology and its implementation are still very much in their early phases. Our experts considered the current reality across four sessions: where are we now; how this may change in the coming decade; the effects this is likely to have on patients, clinicians and healthcare systems; and how we might foster trust in any changes. Throughout the Conference, there was detailed discussion of the role of ethics, law, and regulation.

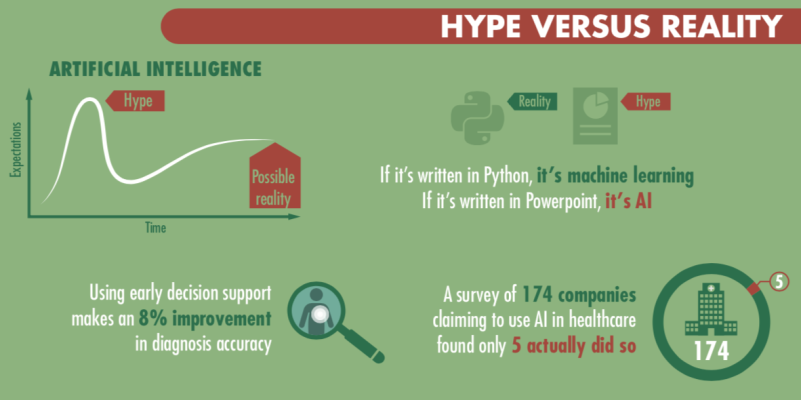

One theme that repeatedly arose was how technical terms are used – BD and AI are often conflated, either with each other, with any statistical approaches, or with any other digital approach to healthcare. As a result, many public references to AI are inaccurate. One expert reported that a recent investigation of AI in healthcare found that, of the 174 companies that identified as AI companies, only five were actually doing AI – the rest were just doing basic statistical analysis. Our experts therefore began by exploring the state of the art right now.

Where are we now?

One key industry leader summarised that while AI promises a lot, the results have been limited in terms of clinical impact to date. Certainly, machine learning can lead to diagnoses that are faster and more accurate than any clinician could achieve, and AI-enabled robots can perform complex surgeries swiftly and reliably. More broadly, extensive use of AI could result in higher quality care, greater health system sustainability, and reduced error. We know, for example, that AI can look at eye scans and use the data to detect sight-threatening disease and cardiovascular disease, while even predicting an individual’s age, gender, systolic blood pressure, and smoking status. An ophthalmologist is unable to make these latter predictions. AI can interpret information people cannot.

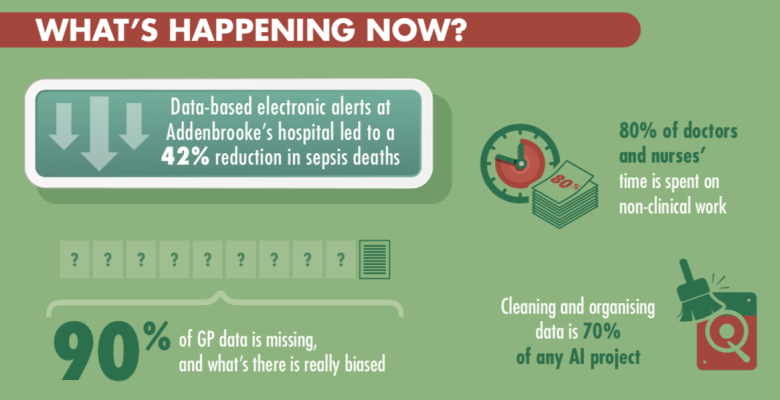

In terms of BD, there have been some tangible impacts, especially around low-level interventions. One example given was in decision support – helping people to make the right decisions or ask the right questions. In one case, mortality rates fell by 42% following BD-informed prompts for front door staff in hospitals about the signs of sepsis. In another example, one of our experts highlighted that by providing GPs with early support, prompting them to ask the best questions for differential diagnosis, BD can improve the accuracy of diagnosis. In their study, the reported improvement was 8%.

One concern that was raised throughout the Conference was the need to look at the entirety of the effects of a technological intervention – the culture of performance metrics currently used tends to focus on process, rather than on genuine experience and outcomes. These metrics also tend to miss the true aims of the patient. It was highlighted that the financial consequences of new approaches for a strained healthcare system need to be considered, especially if there is a high risk of false positives, which could lead to expensive and unneeded treatment. Changing pathways to fit new techniques was felt too often to be time-consuming and difficult across the NHS. Indeed, implementation of new developments in the NHS has proven difficult in the past; it took 35 years for cheap steroids used on sheep to be deployed in the NHS as a life-saving treatment for women.

One challenge is how health data is currently collected in the NHS. An excessive focus on manual data collection can lead clinicians not to pay enough attention to the patient in front of them. In addition, there is often no clear definition or terminology for clinical observations. One clinician noted that there are often hundreds of thousands of data points from one admission, many of which are relatively low level. In primary care, one (unpublished) study found that roughly 90% of GP data is missing – and what is there is frequently biased, as it is filled in to support the diagnostic conclusion of the GP, rather than being a full description of the patient’s situation.

Our attendees noted the rise in personal health monitoring tools, such as Fitbits and Apple Watches, which generate data that GPs are often presented with in consultations. The proliferation of apps and devices that can be used by the public could, however, produce further health inequalities; as was noted, “the people who need most help are often those who are the least connected”.

There was some discussion of the levels of hype currently surrounding AI, and it was noted that this was often driven by the need to attract funding. One expert noted that, in one study, pitch success for start-ups correlated with the frequency with which they mentioned AI. This excess of grand claims had, however, had a detrimental effect on genuine innovators, and had contributed to a decline in trust in the use of digital technology in healthcare.

What might be possible over the next decades?

The discussion moved to consider the future and what could be achieved using AI and BD over the coming decades. There was general agreement that there would be continued research and development, and that as a result more would transfer into clinical practice. However, much of the discussion focused on advances other than the new diagnostic opportunities that had been reported.

Our experts noted that the growing use of surgical robots would not only change clinical practice but would open up new data sources. For example, there had not previously been good data on the pressure surgeons applied, or the exact angles they used. This could be beneficial for training – or raise real concerns for surgeons of constant surveillance. The experts were generally sceptical that there would be fully autonomous surgical robots.

It was strongly argued that some of the biggest needs for AI and BD in healthcare were not directly clinical. There are significant opportunities to improve logistics, operational efficiency and workflow. One example that was given concerned the challenges of blood transportation, where hospitals need to predict the amount of plasma they will need – due to its short half-life, inaccurate predictions can lead to significant wastage. It was suggested that using AI and approaches from companies in other sectors, such as Amazon, could transform the accuracy and timeliness of delivery.

Another angle was using AI to revolutionise workflow and transform administrative processes, speeding up, or even taking on entirely, some tasks like producing rotas that occupy significant time for healthcare staff. It was pointed out that at present doctors spend some 70-80% of their time doing non-clinical tasks, and therefore care could be improved by taking away some of these tasks, and at the same time, improving quality of life for healthcare workers.

Although the press are largely interested in fancy new techniques, it was noted that “toys don’t always equal the best clinical outcomes” and therefore there needs to be a continued focus on the patient, not on publicity. It was argued that patients would benefit considerably from improvements in scheduling and reconciling calendars, arranging follow-up appointment times, and booking transportation. However, it was highlighted that some use of technology to support patients could have undesired outcomes – an Alexa-based system to allow elderly people to call for help more easily if they fell led to an increase in falls, as people became more willing to take risks that they wouldn’t otherwise have taken.

Another point emphasised was the capacity for technology to improve communication skills and empathy across the NHS. There are already trials using computer vision techniques that look at physical and verbal cues, giving feedback on empathy and implicit bias, including gender, ethnic, and racial bias. This could have a significant role in the future in reducing inequalities in health and improving the patient experience, as healthcare workers become better at talking to minorities or people not like themselves. It was suggested that AI could actually be deployed to make us better humans, developing further the functions we are good at and suggesting improvements where we falter.

What will this mean for the workforce of the future?

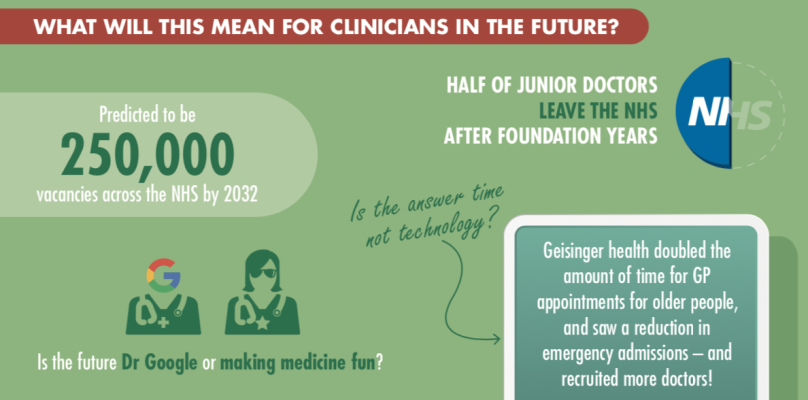

Adopting new technology can have significant effects on the workforce, and it was noted that we are in a period of especially low morale in medicine. Estimates suggest that the NHS could face a staff shortage of 250,000 by 2030, partly due to people leaving earlier than before, or working part-time. The pressures on those working in the NHS are growing, and there was a particular concern that increased connectivity, while bringing many benefits in terms of flexible working and increased diversity, is leading many clinicians to feel like they are always on call – leading to burnout.

Our experts were quick to note that AI and BD present us with an opportunity to consider a fundamental rethink of the role of a doctor. To what extent are they keepers of knowledge, and how much are they patient interfaces, who should focus on understanding the holistic human and communicating complex subjects like risk? It was suggested by one clinician that consulting ‘Dr Google’ was the best diagnostic aid at the moment; how might this change? It was noted, however, that there was a lot more to medicine than diagnosis and prescription, the areas where AI and BD are most likely to intervene.

This change in role raises a series of questions for the profession: How do we train people effectively? How might we build into the curriculum a skill set that evolves with technology rather than skills replaced by technology? How can we use the technology to build wellbeing for those working in healthcare as well as for patients?

The session emphasised the opportunities for technology to empower patients and clinicians. There was a lot of support for the use of these technologies to augment clinical experts, rather than to replace them. However, our experts noted that for this to work, there would need to be a major change in thinking and also in general clinical behaviours. One expert highlighted how one health system in the US has doubled the appointment time in primary care for the over-65s (from 20 to 40 minutes). This has led to significantly reduced emergency admissions, but has also made it easier to recruit and retain doctors, who feel less stressed and more able to help their patients. By deploying technology to do some of the more straightforward tasks like writing rotas, time could be allocated to allow these longer consultations.

There was an emphasis on considering clinicians as humans, including using design thinking. The use of excessive pop-ups commonly leads to alert fatigue, when people stop paying attention to the information provided. The experts suggested that we therefore needed to think about how we augment healthcare work with input from AI, as well as how we collect the data we need from healthcare settings so as to avoid such human factors impacting on the quality of the data.

One major concern that was not resolved surrounded issues of liability when something goes wrong. Would a clinician be liable if an AI-based system made an error, and they simply followed its recommendations? What if they exercised their clinical judgment, and did not follow the AI suggestion? Would liability sit with the programmer of the AI system? The supplier of the data?

Ultimately, clinicians seemed optimistic that these technologies, rather than replacing them, could enhance their roles, and return some of the joy of being a medic.

How can we foster trust and trustworthiness?

There is a need for the health sector, perhaps more than almost any other, to be very careful about maintaining public trust. Although the medical professions are very highly trusted in the UK, the same cannot be said for technology companies. One dichotomy that was brought up was the idea that those in the public sector are virtuous and well-motivated, driven by the Hippocratic oath to act in patients’ best interests, whereas those in the private sector are driven purely by profit. This separation was criticised as being overly simplistic, but it does seem to relate to public attitudes as to whom they would like health information to be shared with.

Baroness Onora O’Neill has drawn a distinction between trust – which is how people feel about an organisation or individual – and trustworthiness – the extent to which they ought to be trusted. Whereas trust can be driven by advertising campaigns and positive publicity, trustworthiness is a deeper and more important concept. One challenge that was raised is how to measure and communicate trustworthiness. There was discussion about the benefits of transparency in demonstrating trustworthiness, and a suggestion that there should be a transition from trust being defined by relationships to being supported by provability.

The Government has launched an initial code of conduct for data-driven health and care technologies, which sets out 10 principles that should be adhered to by any organisation working in this area. It was noted that there is already a legal framework covering some of these issues, but that it would also be beneficial to push for a higher standard. However, should this be a higher legal standard, with judicial consequences, or an unenforceable ethical standard? There was some discussion of the wide range of regulators already operating in this area, and of the risk that having too many interacting organisations could cause a lack of clarity and action. It was agreed that projects should be developed with ethical considerations right from the start. There was some disagreement as to whether the legal framework needed updating, or if the existing rules simply needed to be applied appropriately.

Our experts emphasised, however, that there will always remain some grey areas, and therefore we need to create a framework that allows case-by-case evaluation, and also facilitates the capacity to have an informed debate. One solution was the “regulatory sandbox”, a safe space where stakeholders could talk about their work and work through the decisions they are going to make with regulators, who offer appropriate support. Within this space, innovative thinking could take place, and the risk of being caught in a grey area would be significantly reduced.

One major challenge that was identified throughout the discussion was the current fragmented IT systems in the NHS, which make it very difficult for anyone, inside or outside the NHS, to get hold of all the data that they need, anonymously or not. Data Trusts were mentioned as a possible solution to this, although there was a lack of clarity as to how they might actually operate. In addition, much of the data in the NHS is ‘dirty’, and typically when doing an AI project, about 70% of the time is spent curating and collecting the data. Our experts concluded that there is much work to be done before we can speak of AI and BD in healthcare as living up to the “hype” and bringing real improvements to patients on a daily basis.

About the Rustat Conference Report

A list of experts who attended the Conference is available here. The Agenda for discussion can be found here.

We would also like to thank our Rustat Conference Members who kindly support the Conferences and the Reports.

Authors: Dr Sarah Steele and Dr Julian Huppert

Infographic Design: Mat Hobson